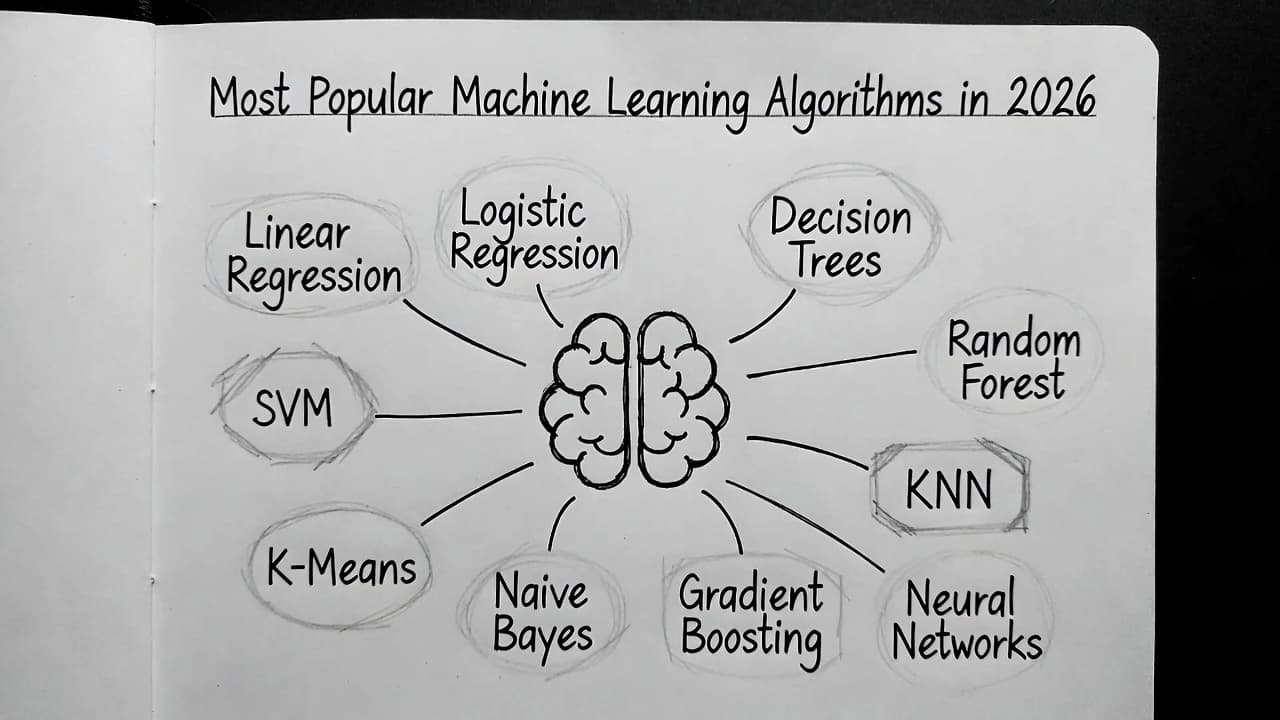

Most Popular Machine Learning Algorithms in 2026

A structured overview of the most widely used machine learning algorithms in 2026, covering supervised, unsupervised, and advanced methods with practical use cases.

Machine learning has become the engine behind countless solutions, from recommendation systems to fraud detection. While new techniques emerge every year, a core set of algorithms continues to dominate industry and research because of their simplicity, reliability, and strong performance on real data.

Here’s a curated list of the most popular machine learning algorithms as of 2026, grouped by learning type, with practical explanations and real-world context.

Supervised Learning Algorithms

These algorithms learn from labeled data to make predictions or classifications.

1. Linear Regression

Predicts continuous values by fitting a straight line (or, with several features, a plane) that minimizes the squared error between predictions and observed values.

Despite its simplicity, Linear Regression remains one of the most important baseline models in production systems. It provides a quick sense of signal strength in the data and is often used as a benchmark before moving to more complex models. Its interpretability makes it especially valuable when stakeholders need to understand feature impact.
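To make the "best-fitting line" concrete, here is a minimal sketch of ordinary least squares for a single feature, using only the Python standard library. The function name `fit_line` and the floor-area and price numbers are made up for illustration; a production baseline would normally come from a library rather than hand-written code.

```python
from statistics import mean

def fit_line(xs, ys):
    """Ordinary least squares for one feature: returns (slope, intercept)."""
    x_bar, y_bar = mean(xs), mean(ys)
    # Covariance-like and variance-like sums (the 1/n factors cancel).
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return slope, intercept

# Hypothetical toy data: floor area (m^2) vs. price (in thousands).
areas = [50, 60, 80, 100, 120]
prices = [150, 180, 240, 300, 360]

slope, intercept = fit_line(areas, prices)
predicted = slope * 90 + intercept  # estimate for an unseen 90 m^2 home
print(slope, intercept, predicted)
```

The slope is the interpretable part: it reads directly as "price change per extra square metre", which is the kind of feature impact stakeholders tend to ask about.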

In retail and pricing systems, I have seen it used as a first-pass model to estimate demand trends before layering more complex forecasting approaches on top.

Common uses: House price prediction, sales forecasting, trend analysis.

2. Logistic Regression

Models probabilities for binary or multi-class classification using a logistic (S-shaped) function.

Logistic Regression continues to be widely used because it balances performance and interpretability. It works particularly well when the log-odds of the outcome are roughly linear in the features and when calibrated probabilities are important. In many regulated industries, its transparency makes it preferable to black-box models.
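As a rough sketch of how the S-shaped function turns a linear score into a probability, the snippet below fits a one-feature logistic model with plain gradient descent. The helper names, the learning rate, the epoch count, and the toy data are all assumptions chosen for illustration, not a recommended training setup.

```python
import math

def sigmoid(z):
    """Logistic function: maps any real score to a value in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def fit_logistic(xs, ys, lr=0.1, epochs=2000):
    """Batch gradient descent on the average log-loss for one feature."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        errors = [sigmoid(w * x + b) - y for x, y in zip(xs, ys)]
        grad_w = sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = sum(errors) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

# Hypothetical data: a single risk score and whether the event occurred.
scores = [1, 2, 3, 4, 5, 6, 7, 8]
labels = [0, 0, 0, 1, 0, 1, 1, 1]

w, b = fit_logistic(scores, labels)
p_low = sigmoid(w * 1 + b)   # predicted probability at the low end
p_high = sigmoid(w * 8 + b)  # predicted probability at the high end
print(round(p_low, 3), round(p_high, 3))
```

Because the model is just a weight and a bias passed through `sigmoid`, the sign and size of `w` can be explained directly, which is a large part of its appeal in regulated settings.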

In banking and risk systems, it is still a standard choice for credit scoring and fraud detection pipelines where explainability is critical.

Common uses: Spam detection, customer churn prediction, fraud detection, medical diagnosis.

3. Decision Trees

Builds a flowchart-like model of if-then decisions based on data features.

Decision Trees are intuitive and easy to visualize, which makes them a strong tool for understanding how decisions are being made. However, they tend to overfit if left unconstrained, which is why they are often used in controlled settings or as building blocks for ensemble methods.
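A single if-then decision can be sketched as a search for the threshold that leaves the purest groups on each side. The example below uses Gini impurity, one common splitting criterion, on one feature; the function names and the income data are hypothetical. A full tree applies the same search recursively, which is also where the overfitting risk comes from if depth is not limited.

```python
def gini(labels):
    """Gini impurity: 0.0 for a pure group, higher for mixed groups."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((labels.count(c) / n) ** 2 for c in set(labels))

def best_split(values, labels):
    """Return (threshold, weighted_gini) for the best 'value <= threshold' rule."""
    best = (None, float("inf"))
    # The largest value is skipped because it would leave the right side empty.
    for t in sorted(set(values))[:-1]:
        left = [y for x, y in zip(values, labels) if x <= t]
        right = [y for x, y in zip(values, labels) if x > t]
        score = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
        if score < best[1]:
            best = (t, score)
    return best

# Hypothetical data: annual income (thousands) and whether the loan defaulted.
income = [20, 25, 30, 45, 50, 60]
defaulted = ["yes", "yes", "yes", "no", "no", "no"]

threshold, impurity = best_split(income, defaulted)
print(threshold, impurity)  # -> 30 0.0
```

The learned rule reads as "if income <= 30 then default, else no default", which is the kind of business rule that is easy to review with non-technical stakeholders.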

In practice, I have seen them used to quickly prototype business rules and validate feature importance before moving to more robust models.

Common uses: Credit risk assessment, medical diagnosis.

4. Random Forest

An ensemble of many decision trees that vote or average their predictions.

Random Forest is one of the most reliable “default” models for tabular data. By averaging many trees, each trained on a random resample of the rows and a random subset of the features, it reduces overfitting while maintaining strong performance across a wide range of problems. It requires less tuning than boosted ensembles or neural networks and is often used as a strong baseline.
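The voting idea can be sketched with very small trees. In the simplified example below, each "tree" is a one-split stump trained on a bootstrap sample and a single randomly chosen feature, and the forest takes a majority vote. This is an illustration under those assumptions rather than a full Random Forest: real implementations grow deeper trees and sample features at every split. All names and data points are hypothetical.

```python
import random
from collections import Counter

def majority(labels):
    """Most frequent label in a list."""
    return Counter(labels).most_common(1)[0][0]

def train_stump(rows, feature):
    """Fit a one-split tree on a single feature; returns a predict function."""
    fallback = majority([y for _, y in rows])
    best = None  # (errors, threshold, left_label, right_label)
    for t in sorted({x[feature] for x, _ in rows}):
        left = [y for x, y in rows if x[feature] <= t]
        right = [y for x, y in rows if x[feature] > t]
        if not right:
            continue  # the largest value leaves nothing on the right
        l_lab, r_lab = majority(left), majority(right)
        errors = sum(y != l_lab for y in left) + sum(y != r_lab for y in right)
        if best is None or errors < best[0]:
            best = (errors, t, l_lab, r_lab)
    if best is None:
        return lambda x: fallback  # no usable split in this sample
    _, t, l_lab, r_lab = best
    return lambda x: l_lab if x[feature] <= t else r_lab

def train_forest(rows, n_trees=25, seed=0):
    rng = random.Random(seed)
    n_features = len(rows[0][0])
    trees = []
    for _ in range(n_trees):
        sample = [rng.choice(rows) for _ in rows]  # bootstrap: sample with replacement
        feature = rng.randrange(n_features)        # random feature for this tree
        trees.append(train_stump(sample, feature))
    return trees

def predict_forest(trees, x):
    """Majority vote across all trees."""
    return majority([tree(x) for tree in trees])

# Hypothetical two-feature data with two well-separated groups.
rows = [((1, 1), "low"), ((2, 1), "low"), ((1, 2), "low"), ((2, 2), "low"),
        ((8, 8), "high"), ((9, 8), "high"), ((8, 9), "high"), ((9, 9), "high")]

trees = train_forest(rows)
print(predict_forest(trees, (0.5, 0.5)), predict_forest(trees, (9.5, 9.5)))
```

Any single stump depends on which rows it happened to draw, but the vote across 25 of them is far more stable, which is the variance reduction the paragraph above describes.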

In real-world deployments, I have seen Random Forest used in fraud detection and customer analytics where data is noisy and feature interactions are complex.

Common uses: Classification and regression on messy, high-dimensional data.

5. Support Vector Machines (SVM)

Finds the optimal hyperplane to separate classes, effective even in high-dimensional spaces.

SVMs are powerful when the margin between classes is clear and the dataset is not too large. With the kernel trick, they can model non-linear relationships effectively. However, training becomes computationally expensive at scale, which limits their use in large production systems.
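The kernel trick is easier to trust once you see it numerically. The sketch below checks, for 2-D inputs, that the degree-2 polynomial kernel `(x . z)^2` gives the same value as a dot product in an explicitly expanded feature space, without ever building that space. The function names are hypothetical, and this shows only the kernel identity, not SVM training itself.

```python
import math

def poly_kernel(x, z):
    """Degree-2 polynomial kernel: k(x, z) = (x . z)^2."""
    return sum(a * b for a, b in zip(x, z)) ** 2

def explicit_features(x):
    """Explicit degree-2 feature map for a 2-D input."""
    x1, x2 = x
    return (x1 * x1, math.sqrt(2) * x1 * x2, x2 * x2)

x, z = (1.0, 2.0), (3.0, 4.0)

via_kernel = poly_kernel(x, z)
via_features = sum(
    a * b for a, b in zip(explicit_features(x), explicit_features(z))
)
print(via_kernel, round(via_features, 6))  # both are 121.0 up to float rounding
```

For higher degrees or the RBF kernel, the explicit feature space grows very large or becomes infinite-dimensional, so computing the kernel directly is what keeps non-linear SVMs tractable on small and medium datasets.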

In smaller datasets, especially in text and image classification problems, I have seen SVMs perform competitively with more complex models.

Common uses: Image classification, text categorization.

6. K-Nearest Neighbors (KNN)

Classifies or predicts based on the majority vote or average of the closest training examples.

KNN is simple but surprisingly effective in certain scenarios. It makes no assumptions about the underlying data distribution, which can be an advantage. The downside is that prediction becomes slow at scale, because each query is compared against the stored training examples, so large datasets require efficient indexing strategies.
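A brute-force version of KNN fits in a few lines, which also makes the scaling problem visible: every prediction sorts the entire training set by distance. The sketch below assumes Euclidean distance and `k=3`; the function name, the customer labels, and the data are hypothetical. `math.dist` requires Python 3.8 or newer.

```python
import math
from collections import Counter

def knn_predict(train, query, k=3):
    """Classify `query` by majority vote among its k nearest training points."""
    nearest = sorted(train, key=lambda row: math.dist(row[0], query))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]

# Hypothetical data: (visits per month, average basket size) -> customer type.
train = [
    ((1.0, 1.0), "casual"), ((1.5, 2.0), "casual"), ((2.0, 1.0), "casual"),
    ((6.0, 6.0), "frequent"), ((7.0, 6.5), "frequent"), ((6.5, 7.5), "frequent"),
]

print(knn_predict(train, (2.0, 2.0)))  # -> casual
print(knn_predict(train, (6.0, 7.0)))  # -> frequent
```

In larger systems the `sorted` call is the part that gets replaced, typically by a tree-based or approximate nearest-neighbor index.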

In recommendation systems, I have seen KNN-style approaches used for similarity-based matching before more advanced models are introduced.

Common uses: Recommendation systems, pattern recognition.

7. Naive Bayes

A fast probabilistic classifier based on Bayes’ theorem with an independence assumption.

Naive Bayes works well even when its independence assumption is not strictly true. Its speed and low computational cost make it ideal for large-scale text classification problems. It is often used as a baseline model in NLP pipelines.
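Here is a minimal sketch of a multinomial Naive Bayes text classifier with add-one (Laplace) smoothing. It assumes whitespace tokenization and ignores words never seen in training; the function names and the four training messages are made up for illustration.

```python
import math
from collections import Counter, defaultdict

def train_nb(docs):
    """docs: list of (text, label). Returns (class_counts, word_counts, vocab)."""
    class_counts = Counter()
    word_counts = defaultdict(Counter)
    vocab = set()
    for text, label in docs:
        class_counts[label] += 1
        for word in text.lower().split():
            word_counts[label][word] += 1
            vocab.add(word)
    return class_counts, word_counts, vocab

def predict_nb(model, text):
    """Pick the class with the highest log prior plus summed log likelihoods."""
    class_counts, word_counts, vocab = model
    total_docs = sum(class_counts.values())
    scores = {}
    for label in class_counts:
        total_words = sum(word_counts[label].values())
        score = math.log(class_counts[label] / total_docs)
        for word in text.lower().split():
            if word not in vocab:
                continue  # skip words never seen during training
            # Add-one smoothing keeps unseen (word, class) pairs from zeroing the score.
            score += math.log(
                (word_counts[label][word] + 1) / (total_words + len(vocab))
            )
        scores[label] = score
    return max(scores, key=scores.get)

# Hypothetical training messages.
docs = [
    ("win free prize now", "spam"),
    ("free money offer", "spam"),
    ("meeting schedule tomorrow", "ham"),
    ("project meeting notes", "ham"),
]

model = train_nb(docs)
print(predict_nb(model, "free prize offer"))        # -> spam
print(predict_nb(model, "meeting notes tomorrow"))  # -> ham
```

Training is a single counting pass and prediction is a handful of additions per word, which is why this approach stays attractive when speed matters more than the last point of accuracy.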

In spam filtering and sentiment analysis systems, it continues to be a strong lightweight option where speed matters more than perfect accuracy.

Common uses: Text classification tasks like spam filtering and sentiment analysis.

Unsupervised Learning Algorithms

These algorithms discover patterns in unlabeled data.

8. K-Means Clustering

Partitions data into k groups by minimizing distances to cluster centers.

K-Means is widely used because of its simplicity and scalability. However, it implicitly assumes roughly spherical clusters of similar size and requires the number of clusters to be chosen upfront. Despite these limitations, it remains one of the most practical tools for exploratory data analysis.
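The standard procedure (Lloyd's algorithm) alternates two steps: assign each point to its nearest center, then move each center to the mean of its points. The sketch below assumes fixed starting centers so the result is reproducible; real implementations usually pick starting centers randomly or with a smarter seeding scheme. The function name and data are hypothetical.

```python
import math

def kmeans(points, centers, iterations=10):
    """Lloyd's algorithm with caller-supplied initial centers."""
    for _ in range(iterations):
        # Assignment step: each point joins its nearest center.
        clusters = [[] for _ in centers]
        for p in points:
            idx = min(range(len(centers)), key=lambda i: math.dist(p, centers[i]))
            clusters[idx].append(p)
        # Update step: each center moves to the mean of its cluster.
        centers = [
            tuple(sum(c) / len(cluster) for c in zip(*cluster)) if cluster else center
            for cluster, center in zip(clusters, centers)
        ]
    return centers, clusters

# Hypothetical 2-D data with two visible groups.
points = [(1, 1), (1, 2), (2, 1), (8, 8), (9, 8), (8, 9)]

# Deliberately poor start: both initial centers sit in the same group.
centers, clusters = kmeans(points, centers=[(1, 1), (2, 1)])
print([tuple(round(v, 2) for v in c) for c in centers])
# -> [(1.33, 1.33), (8.33, 8.33)]
```

Note that `k` is implied by the number of starting centers, which is exactly the "defined upfront" limitation: the algorithm will return that many groups whether or not the data supports them.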

In customer segmentation problems, I have seen it used as an initial step to identify broad behavioral groups before applying more refined clustering techniques.

Common uses: Customer segmentation, image compression, anomaly detection.

Advanced & Ensemble Methods

9. Gradient Boosting (XGBoost, LightGBM, CatBoost)

Builds models sequentially, with each new model trained to correct the errors of the ensemble built so far.

Gradient Boosting models are often the top performers for structured data. They capture complex interactions and non-linear relationships effectively. The trade-off is increased complexity and the need for careful tuning to avoid overfitting.
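The "each model corrects the previous errors" idea can be sketched for regression with squared error, where the errors are simply the residuals. The example below fits one-split stumps to the current residuals and adds a shrunken copy of each to the ensemble. It is a bare-bones illustration, not how XGBoost, LightGBM, or CatBoost are implemented; the function names, round count, learning rate, and data are assumptions.

```python
def fit_stump(xs, residuals):
    """One-split regressor that minimizes squared error on the residuals."""
    best = None  # (sse, threshold, left_mean, right_mean)
    for t in sorted(set(xs))[:-1]:
        left = [r for x, r in zip(xs, residuals) if x <= t]
        right = [r for x, r in zip(xs, residuals) if x > t]
        l_mean, r_mean = sum(left) / len(left), sum(right) / len(right)
        sse = (sum((r - l_mean) ** 2 for r in left)
               + sum((r - r_mean) ** 2 for r in right))
        if best is None or sse < best[0]:
            best = (sse, t, l_mean, r_mean)
    _, t, l_mean, r_mean = best
    return lambda x: l_mean if x <= t else r_mean

def fit_gbm(xs, ys, n_rounds=50, learning_rate=0.1):
    """Gradient boosting for squared error: repeatedly fit stumps to residuals."""
    base = sum(ys) / len(ys)  # start from the mean prediction
    stumps = []
    preds = [base] * len(xs)
    for _ in range(n_rounds):
        residuals = [y - p for y, p in zip(ys, preds)]
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        preds = [p + learning_rate * stump(x) for x, p in zip(xs, preds)]

    def predict(x):
        return base + learning_rate * sum(s(x) for s in stumps)

    return predict

def mse(ys, preds):
    return sum((y - p) ** 2 for y, p in zip(ys, preds)) / len(ys)

# Hypothetical data with a jump between x = 4 and x = 5.
xs = [1, 2, 3, 4, 5, 6, 7, 8]
ys = [1.2, 1.9, 3.2, 3.8, 8.1, 8.9, 10.2, 10.8]

predict = fit_gbm(xs, ys)
mse_baseline = mse(ys, [sum(ys) / len(ys)] * len(ys))
mse_boosted = mse(ys, [predict(x) for x in xs])
print(round(mse_baseline, 3), round(mse_boosted, 3))
```

The two knobs visible here, `n_rounds` and `learning_rate`, are the ones that most directly trade training fit against overfitting, so the drop in training error shown above should always be checked on held-out data.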

In many production systems, especially in finance and e-commerce, I have seen Gradient Boosting models consistently outperform simpler approaches when properly tuned.

Common uses: Kaggle competitions, ranking tasks, structured/tabular data problems.

10. Neural Networks & Deep Learning Basics

Stacks layers of learned non-linear transformations: multilayer perceptrons for general inputs, CNNs for images, and RNNs or LSTMs for sequences.

Deep learning models dominate unstructured data problems such as images, text, and audio. They require more data, compute, and engineering effort, but they unlock capabilities that traditional models cannot achieve.
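A tiny forward pass shows what a hidden layer adds. The network below has two inputs, two ReLU hidden units, and one output, and it computes XOR, a function that no single linear model can represent. The weights are hand-picked for illustration rather than learned; in practice they would be found by backpropagation in a deep learning framework. All names are hypothetical.

```python
def relu(values):
    """Element-wise ReLU activation."""
    return [max(0.0, v) for v in values]

def dense(inputs, weights, biases):
    """Fully connected layer: one weighted sum plus bias per output unit."""
    return [
        sum(w * x for w, x in zip(row, inputs)) + b
        for row, b in zip(weights, biases)
    ]

# Hand-picked weights (an assumption for illustration, not learned values).
W1 = [[1.0, 1.0], [1.0, 1.0]]  # hidden layer: 2 inputs -> 2 units
b1 = [0.0, -1.0]
W2 = [[1.0, -2.0]]             # output layer: 2 units -> 1 output
b2 = [0.0]

def mlp(x):
    hidden = relu(dense(x, W1, b1))
    return dense(hidden, W2, b2)[0]

outputs = [mlp(x) for x in [(0, 0), (0, 1), (1, 0), (1, 1)]]
print(outputs)  # -> [0.0, 1.0, 1.0, 0.0]
```

Removing the `relu` call collapses the two layers into a single linear map, and the XOR pattern can no longer be produced. That non-linearity between layers is what lets deeper models learn their own features from raw inputs.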

In real-world deployments, I have seen deep learning used where feature engineering alone cannot capture the complexity of the data, particularly in computer vision and NLP systems.

Common uses: Computer vision, natural language processing, speech recognition.

Quick Tips for Practitioners

- Beginners: Start with Linear or Logistic Regression, Decision Trees, and Random Forest. These build intuition quickly and are widely supported.

- Industry reality (2026): Tree-based ensembles and Logistic Regression still handle most tabular data tasks exceptionally well. Deep learning becomes essential for unstructured data.

- Choosing the right model: Focus on data type, interpretability needs, dataset size, and business constraints. Always validate with cross-validation and real-world metrics.
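For the cross-validation advice in the last tip, here is a minimal sketch of how k-fold splitting works: every sample lands in exactly one test fold and is used for training in the others. The function name is hypothetical, and the sketch assumes the data has already been shuffled, since it cuts contiguous folds.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_idx, test_idx) pairs for k contiguous folds."""
    # Spread the remainder so fold sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test_idx = list(range(start, start + size))
        train_idx = list(range(0, start)) + list(range(start + size, n_samples))
        yield train_idx, test_idx
        start += size

folds = list(k_fold_indices(10, 3))
for train_idx, test_idx in folds:
    print(len(train_idx), test_idx)
# 6 [0, 1, 2, 3]
# 7 [4, 5, 6]
# 7 [7, 8, 9]
```

In each round the model is fit on `train_idx` and scored on `test_idx`, and the k scores are averaged. For time-ordered data, a forward-chaining split is usually a safer choice than shuffled folds.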

These algorithms form the foundation even as transformers and specialized models advance. In practice, strong systems are rarely built on a single model. They combine simple, reliable components that work well together.
